Proper citation: « The Philosophical Concept of Algorithmic Intelligence », Spanda Journal special issue on “Collective Intelligence”, V (2), December 2014, pp. 17-25. The original text can be found for free online at Spanda.

“Transcending the media, airborne machines will announce the voice of the many. Still indiscernible, cloaked in the mists of the future, bathing another humanity in its murmuring, we have a rendezvous with the over-language.” Collective Intelligence, 1994, p. xxviii.

Twenty years after Collective Intelligence

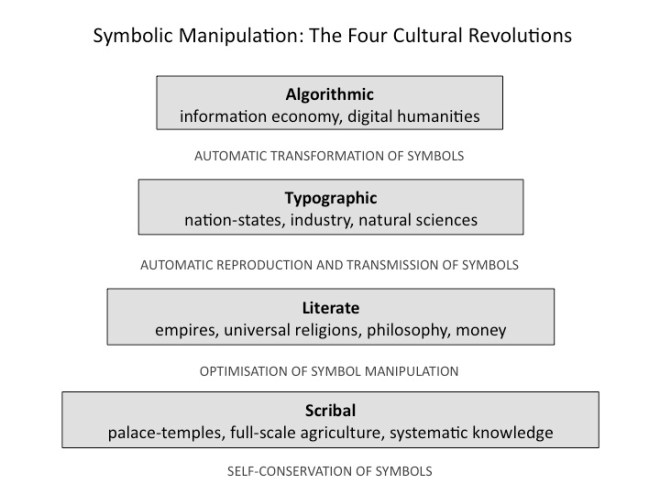

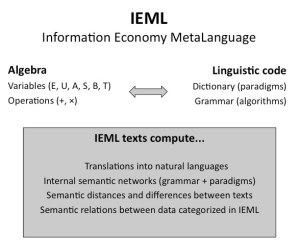

This paper was written in 2014, twenty years after L’intelligence collective [the original French edition of Collective Intelligence].[2] The main purpose of Collective Intelligence was to formulate a vision of a cultural and social evolution that would be capable of making the best use of the new possibilities opened up by digital communication. Long before the success of social networks on the Web,[3] I predicted the rise of “engineering the social bond.” Eight years before the founding of Wikipedia in 2001, I imagined an online “cosmopedia” structured in hypertext links. When the digital humanities and the social media had not even been named, I was calling for an epistemological and methodological transformation of the human sciences. But above all, at a time when less than one percent of the world’s population was connected,[4] I was predicting (along with a small minority of thinkers) that the Internet would become the centre of the global public space and the main medium of communication, in particular for the collaborative production and sharing of knowledge and the dissemination of news.[5] In spite of the considerable growth of interactive digital communication over the past twenty years, we are still far from the ideal described in Collective Intelligence. It seemed to me already in 1994 that the anthropological changes under way would take root and inaugurate a new phase in the human adventure only if we invented what I then called an “over-language.” How can communication readily reach across the multiplicity of dialects and cultures? How can we map the deluge of digital data, order it around our interests and extract knowledge from it? How can we master the waves, currents and depths of the software ocean? Collective Intelligence envisaged a symbolic system capable of harnessing the immense calculating power of the new medium and making it work for our benefit. 
But the over-language I foresaw in 1994 was still in the “indiscernible” period, shrouded in “the mists of the future.” Twenty years later, the curtain of mist has been partially pierced: the over-language now has a name, IEML (acronym for Information Economy MetaLanguage), a grammar and a dictionary.[6]

Reflexive collective intelligence

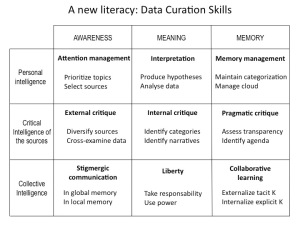

Collective intelligence drives human development, and human development supports the growth of collective intelligence. By improving collective intelligence we can place ourselves in this feedback loop and orient it in the direction of a self-organizing virtuous cycle. This is the strategic intuition that has guided my research. But how can we improve collective intelligence? In 1994, the concept of digital collective intelligence was still revolutionary. In 2014, this term is commonly used by consultants, politicians, entrepreneurs, technologists, academics and educators. Crowdsourcing has become a common practice, and knowledge management is now supported by the decentralized use of social media. The interconnection of humanity through the Internet, the development of the knowledge economy, the rush to higher education and the rise of cloud computing and big data are all indicators of an increase in our cognitive power. But we have yet to cross the threshold of reflexive collective intelligence. Just as dancers can only perfect their movements by reflecting them in a mirror, just as yogis develop awareness of their inner being only through the meditative contemplation of their own mind, collective intelligence will only be able to set out on the path of purposeful learning and thus move on to a new stage in its growth by achieving reflexivity. It will therefore need to acquire a mirror that allows it to observe its own cognitive processes. Be careful! Collective intelligence does not and will not have autonomous consciousness: when I talk about reflexive collective intelligence, I mean that human individuals will have a clearer and better-shared knowledge than they have today of the collective intelligence in which they participate, a knowledge based on transparent principles and perfectible scientific methods.

The key: A complete modelling of language

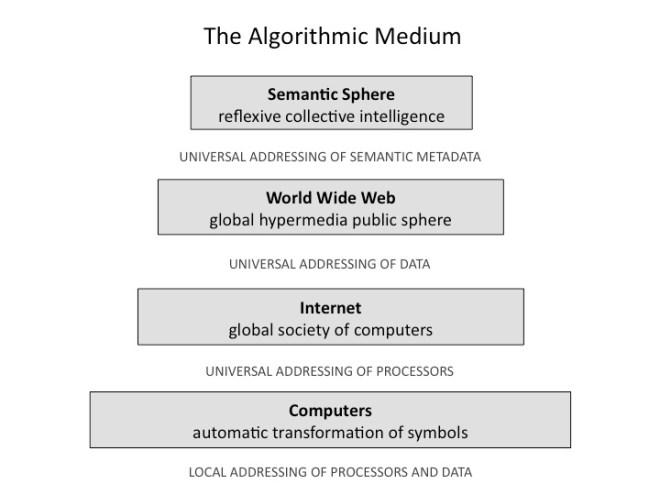

But how can a mirror of collective intelligence be constructed? It is clear that the context of reflection will be the algorithmic medium or, to put it another way, the Internet, the calculating power of cloud computing, ubiquitous communication and distributed interactive mobile interfaces. Since we can only reflect collective intelligence in the algorithmic medium, we must yield to the nature of that medium and have a calculable model of our intelligence, a model that will be fed by the flows of digital data from our activities. In short, we need a mathematical (with calculable models) and empirical (based on data) science of collective intelligence. But, once again, is such a science possible? Since humanity is a species that is highly social, its intelligence is intrinsically social, or collective. If we had a mathematical and empirical science of human intelligence in general, we could no doubt derive a science of collective intelligence from it. This leads us to a major problem that has been investigated in the social sciences, the human sciences, the cognitive sciences and artificial intelligence since the twentieth century: is a mathematized science of human intelligence possible? It is language or, to put it another way, symbolic manipulation that distinguishes human cognition. We use language to categorize sensory data, to organize our memory, to think, to communicate, to carry out social actions, etc. My research has led me to the conclusion that a science of human intelligence is indeed possible, but on the condition that we solve the problem of the mathematical modelling of language. 
I am speaking here of a complete scientific modelling of language, one that would not be limited to the purely logical and syntactic aspects or to statistical correlations of corpora of texts, but would be capable of expressing semantic relationships formed between units of meaning, and doing so in an algebraic, generative mode.[7] Convinced that an algebraic model of semantics was the key to a science of intelligence, I focused my efforts on discovering such a model; the result was the invention of IEML.[8] IEML—an artificial language with calculable semantics—is the intellectual technology that will make it possible to find answers to all the above-mentioned questions. We now have a complete scientific modelling of language, including its semantic aspects. Thus, a science of human intelligence is now possible. It follows, then, that a mathematical and empirical science of collective intelligence is possible. Consequently, a reflexive collective intelligence is in turn possible. This means that the acceleration of human development is within our reach.

The scientific file: The Semantic Sphere

I have written two volumes on my project of developing the scientific framework for a reflexive collective intelligence, and I am currently writing the third. This trilogy can be read as the story of a voyage of discovery. The first volume, The Semantic Sphere 1 (2011),[9] provides the justification for my undertaking. It contains the statement of my aims, a brief intellectual autobiography and, above all, a detailed dialogue with my contemporaries and my predecessors. With a substantial bibliography,[10] that volume presents the main themes of my intellectual process, compares my thoughts with those of the philosophical and scientific tradition, engages in conversation with the research community, and finally, describes the technical, epistemological and cultural context that motivated my research. Why write more than four hundred pages to justify a program of scientific research? For one very simple reason: no one in the contemporary scientific community thought that my research program had any chance of success. What is important in computer science and artificial intelligence is logic, formal syntax, statistics and biological models. Engineers generally view social sciences such as sociology or anthropology as nothing but auxiliary disciplines limited to cosmetic functions: for example, the analysis of usage or the experience of users. In the human sciences, the situation is even more difficult. All those who have tried to mathematize language, from Leibniz to Chomsky, to mention only the greatest, have failed, achieving only partial results. Worse yet, the greatest masters, those from whom I have learned so much, from the semiologist Umberto Eco[11] to the anthropologist Lévi-Strauss,[12] have stated categorically that the mathematization of language and the human sciences is impracticable, impossible, utopian. 
The path I wanted to follow was forbidden not only by the habits of engineers and the major authorities in the human sciences but also by the nearly universal view that “meaning depends on context,”[13] unscrupulously confusing mathematization and quantification, denouncing on principle, in a “knee-jerk” reaction, the “ethnocentric bias” of any universalist approach[14] and recalling the “failure” of Esperanto.[15] I have even heard some of the most agnostic speak of the curse of Babel. It is therefore not surprising that I want to make a strong case in defending the scientific nature of my undertaking: all explorers have returned empty-handed from this voyage toward mathematical language, if they returned at all.

The metalanguage: IEML

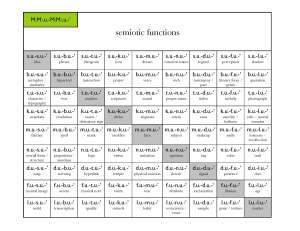

But one cannot go on forever announcing one’s departure on a voyage: one must set forth, navigate . . . and return. The second volume of my trilogy, La grammaire d’IEML,[16] contains the very technical account of my journey from algebra to language. In it, I explain how to construct sentences and texts in IEML, with many examples. But that 150-page book also contains 52 very dense pages of algorithms and mathematics that show in detail how the internal semantic networks of that artificial language can be calculated and translated automatically into natural languages. To connect a mathematical syntax to a semantics in natural languages, I had to, almost single-handed,[17] face storms on uncharted seas, to advance across the desert with no certainty that fertile land would be found beyond the horizon, to wander for twenty years in the convoluted labyrinth of meaning. But by gradually joining sign, being and thing in turn in the sense of the virtual and actual, I finally had my Ariadne’s thread, and I made a map of the labyrinth, a complicated map of the metalanguage, that “Northwest Passage”[18] where the waters of the exact sciences and the human sciences converged. I had set my course in a direction no one considered worthy of serious exploration since the crossing was thought impossible. But, against all expectations, my journey reached its goal. The IEML Grammar is the scientific proof of this. The mathematization of language is indeed possible, since here is a mathematical metalanguage. What is it exactly? IEML is an artificial language with calculable semantics that puts no limits on the possibilities for the expression of new meanings. Given a text in IEML, algorithms reconstitute the internal grammatical and semantic network of the text, translate that network into natural languages and calculate the semantic relationships between that text and the other texts in IEML. 
The metalanguage generates a huge group of symmetric transformations between semantic networks, which can be measured and navigated at will using algorithms. The IEML Grammar demonstrates the calculability of the semantic networks and presents the algorithmic workings of the metalanguage in detail. Used as a system of semantic metadata, IEML opens the way to new methods for analyzing large masses of data. It will be able to support new forms of translinguistic hypertextual communication in social media, and will make it possible for conversation networks to observe and perfect their own collective intelligence. For researchers in the human sciences, IEML will structure an open, universal encyclopedic library of multimedia data that reorganizes itself automatically around subjects and the interests of its users.

A new frontier: Algorithmic Intelligence

Having mapped the path I discovered in La grammaire d’IEML, I will now relate what I saw at the end of my journey, on the other side of the supposedly impassable territory: the new horizons of the mind that algorithmic intelligence illuminates. Because IEML is obviously not an end in itself. It is only the necessary means for the coming great digital civilization to enable the sun of human knowledge to shine more brightly. I am talking here about a future (but not so distant) state of intelligence, a state in which capacities for reflection, creation, communication, collaboration, learning, and analysis and synthesis of data will be infinitely more powerful and better distributed than they are today. With the concept of Algorithmic Intelligence, I have completed the risky work of prediction and cultural creation I undertook with Collective Intelligence twenty years ago. The contemporary algorithmic medium is already characterized by digitization of data, automated data processing in huge industrial computing centres, interactive mobile interfaces broadly distributed among the population and ubiquitous communication. We can make this the medium of a new type of knowledge—a new episteme[19]—by adding a system of semantic metadata based on IEML. The purpose of this paper is precisely to lay the philosophical and historical groundwork for this new type of knowledge.

Philosophical genealogy of algorithmic intelligence

The three ages of reflexive knowledge

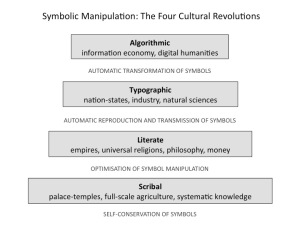

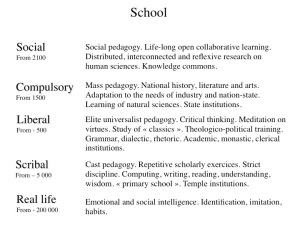

Since my project here involves a reflexive collective intelligence, I would like to place the theme of reflexive knowledge in its historical and philosophical context. As a first approximation, reflexive knowledge may be defined as knowledge knowing itself. “All men by nature desire to know,” wrote Aristotle, and this knowledge implies knowledge of the self.[20] Human beings have no doubt been speculating about the forms and sources of their own knowledge since the dawn of consciousness. But the reflexivity of knowledge took a decisive step around the middle of the first millennium BCE,[21] during the period when the Buddha, Confucius, the Hebrew prophets, Socrates and Zoroaster (in alphabetical order) lived. These teachers involved the entire human race in their investigations: they reflected consciousness from a universal perspective. This first great type of systematic research on knowledge, whether philosophical or religious, almost always involved a divine ideal, or at least a certain “relation to Heaven.” Thus we may speak of a theosophical age of reflexive knowledge. I will examine the Aristotelian lineage of this theosophical consciousness, which culminated in the concept of the agent intellect. Starting in the sixteenth century in Europe—and spreading throughout the world with the rise of modernity—there was a second age of reflection on knowledge, which maintained the universal perspective of the previous period but abandoned the reference to Heaven and confined itself to human knowledge, with its recognized limits but also its rational ideal of perfectibility. This was the second age, the scientific age, of reflexive knowledge. Here, the investigation follows two intertwined paths: one path focusing on what makes knowledge possible, the other on what limits it. In both cases, knowledge must define its transcendental subject, that is, it must discover its own determinations. 
There are many signs in 2014 indicating that in the twenty-first century—around the point where half of humanity is connected to the Internet—we will experience a third stage of reflexive knowledge. This “version 3.0” will maintain the two previous versions’ ideals of universality and scientific perfectibility but will be based on the intensive use of technology to augment and reflect systematically our collective intelligence, and therefore our capacities for personal and social learning. This is the coming technological age of reflexive knowledge with its ideal of an algorithmic intelligence. The brief history of these three modalities—theosophical, scientific and technological—of reflexive knowledge can be read as a philosophical genealogy of algorithmic intelligence.

The theosophical age and its agent intellect

A few generations earlier, Socrates might have been a priest in the circle around the Pythia; he had taken the famous maxim “Know thyself” from the Temple of Apollo at Delphi. But in the fifth century BCE in Athens, Socrates extended the Delphic injunction in an unexpected way, introducing dialectical inquiry. He asked his contemporaries: What do you think? Are you consistent? Can you justify what you are saying about courage, justice or love? Could you repeat it seriously in front of a little group of intelligent or curious citizens? He thus opened the door to a new way of knowing one’s own knowledge, a rational expansion of consciousness of self. His main disciple, Plato, followed this path of rigorous questioning of the unthinking categorization of reality, and finally discovered the world of Ideas. Ideas for Plato are intellectual forms that, unlike the phenomena they categorize, do not belong to the world of Becoming. These intelligible forms are the original essences, archetypes beyond reality, which project into phenomenal time and space all those things that seem to us to be truly real because they are tangible, but that are actually only pale copies of the Ideas. We would say today that our experience is mainly determined by our way of categorizing it. Plato taught that humanity can only know itself as an intelligent species by going back to the world of Ideas and coming into contact with what explains and motivates its own knowledge. Aristotle, who was Plato’s student and Alexander the Great’s tutor, created a grand encyclopedic synthesis that would be used as a model for eighteen centuries in a multitude of cultures. In it, he integrates Plato’s discovery of Ideas with the sum of knowledge of his time. He places at the top of his hierarchical cosmos divine thought knowing itself. 
And in his Metaphysics,[22] he defines the divinity as “thought thinking itself.” This supreme self-reflexive thought was for him the “prime mover” that inspires the eternal movement of the cosmos. In De Anima,[23] his book on psychology and the theory of knowledge, he states that, under the effect of an agent intellect separate from the body, the passive intellect of the individual receives intelligible forms, a little like the way the senses receive sensory forms. In thinking these intelligible forms, the passive intellect becomes one with its objects and, in so doing, knows itself. Starting from the enigmatic propositions of Aristotle’s theology and psychology, a whole lineage of Peripatetic and Neo-Platonic philosophers—first “pagans,” then Muslims, Jews and Christians—developed the discipline of noetics, which speculates on the divine intelligence, its relation to human intelligence and the type of reflexivity characteristic of intelligence in general.[24] According to the masters of noetics, knowledge can be conceptually divided into three aspects that, in reality, are indissociable and complementary:

- the intellect, or the knowing subject

- the intelligence, or the operation of the subject

- the intelligible, or what is known—or can be known—by the subject by virtue of its operation

From a theosophical perspective, everything that happens takes place in the unity of a self-reflexive divine thought, or (in the Indian tradition) in the consciousness of an omniscient Brahman or Buddha, open to infinity. In the Aristotelian tradition, Avicenna, Maimonides and Albert the Great considered that the identity of the intellect, the intelligence and the intelligible was achieved eternally in God, in the perfect reflexivity of thought thinking itself. In contrast, it was clear to our medieval theosophists that in the case of human beings, the three aspects of knowledge were neither complete nor identical. Indeed, since the passive intellect knows itself only through the intermediary of its objects, and these objects are constantly disappearing and being replaced by others, the reflexive knowledge of a finite human being can only be partial and transitory. Ultimately, human knowledge could know itself only if it simultaneously knew, completely and enduringly, all its objects. But that, obviously, is reserved only for the divinity. I should add that the “one beyond the one” of the neo-Platonist Plotinus and the transcendent deity of the Abrahamic traditions are beyond the reach of the human mind. That is why our theosophists imagined a series of mediations between transcendence and finitude. In the middle of that series, a metaphysical interface provides communication between the unimaginable and inaccessible deity and mortal humanity dispersed in time and space, whose living members can never know—or know themselves—other than partially. At this interface, we find the agent intellect, which is separate from matter in Aristotle’s psychology. The agent intellect is not limited—in the realm of time—to sending the intelligible categories that inform the human passive intellect; it also determines—in the realm of eternity—the maximum limit of what the human race can receive of the universal and perfectly reflexive knowledge of the divine. 
That is why, according to the medieval theosophists, the best a mortal intelligence can do to approach complete reflexive knowledge is to contemplate the operation in itself of the agent intellect that emanates from above and go back to the source through it. In accordance with this regulating ideal of reflexive knowledge, living humanity is structured hierarchically, because human beings are more or less turned toward the illumination of the agent intellect. At the top, prophets and theosophists receive a bright light from the agent intellect, while at the bottom, human beings turned toward coarse material appetites receive almost nothing. The influx of intellectual forms is gradually obscured as we go down the scale of degree of openness to the world above.

The scientific age and its transcendental subject

With the European Renaissance, the use of the printing press, the construction of new observation instruments, and the development of mathematics and experimental science heralded a new era. Reflection on knowledge took a critical turn with Descartes’s introduction of radical doubt and the scientific method, in accordance with the needs of educated Europe in the seventeenth century. God was still present in the Cartesian system, but He was only there, ultimately, to guarantee the validity of the efforts of human scientific thought: “God is not a deceiver.”[25] The fact remains that Cartesian philosophy rests on the self-reflexive edge, which has now moved from the divinity to the mortal human: “I think, therefore I am.”[26] In the second half of the seventeenth century, Spinoza and Leibniz received the critical scientific rationalism developed by Descartes, but they were dissatisfied with his dualism of thought (mind) and extension (matter). They therefore attempted, each in his own way, to constitute reflexive knowledge within the framework of coherent monism. For Spinoza, nature (identified with God) is a unique and infinite substance of which thought and extension are two necessary attributes among an infinity of attributes. This strict ontological monism is counterbalanced by a pluralism of expression, because the unique substance possesses an infinity of attributes, and each attribute, an infinity of modes. The summit of human freedom according to Spinoza is the intellectual love of God, that is, the most direct and intuitive possible knowledge of the necessity that moves the nature to which we belong. For Leibniz, the world is made up of monads, metaphysical entities that are closed but are capable of an inner perception in which the whole is reflected from their singular perspective. 
The consistency of this radical pluralism is ensured by the unique, infinite divine intelligence that has considered all possible worlds in order to create the best one, which corresponds to the most complex—or the richest—of the reciprocal reflections of the monads. As for human knowledge—which is necessarily finite—its perfection coincides with the clearest possible reflection of a totality that includes it but whose unity is thought only by the divine intelligence. After Leibniz and Spinoza, the eighteenth century saw the growth of scientific research, critical thought and the educational practices of the Enlightenment, in particular in France and the British Isles. The philosophy of the Enlightenment culminated with Kant, for whom the development of knowledge was now contained within the limits of human reason, without reference to the divinity, even to envelop or guarantee its reasoning. But the ideal of reflexivity and universality remained. The issue now was to acquire a “scientific” knowledge of human intelligence, which could not be done without the representation of knowledge to itself, without a model that would describe intelligence in terms of what is universal about it. This is the purpose of Kantian transcendental philosophy. Here, human intelligence, armed with its reason alone, now faces only the phenomenal world. Human intelligence and the phenomenal world presuppose each other. Intelligence is programmed to know sensory phenomena that are necessarily immersed in space and time. As for phenomena, their main dimensions (space, time, causality, etc.) correspond to ways of perceiving and understanding that are specific to human intelligence. These are forms of the transcendental subject and not intrinsic characteristics of reality. 
Since we are confined within our cognitive possibilities, it is impossible to know what things are “in themselves.” For Kant, the summit of reflexive human knowledge is in a critical awareness of the extension and the limits of our possibility of knowing. Descartes, Spinoza, Leibniz, the English and French Enlightenment, and Kant accomplished a great deal in two centuries, and paved the way for the modern philosophy of the nineteenth and twentieth centuries. A new form of reflexive knowledge grew, spread, and fragmented into the human sciences, which mushroomed with the end of the monopoly of theosophy. As this dispersion occurred, great philosophers attempted to grasp reflexive knowledge in its unity. The reflexive knowledge of the scientific era neither suppressed nor abolished reflexive knowledge of the theosophical type, but it opened up a new domain of legitimacy of knowledge, freed of the ideal of divine knowledge. This de jure separation did not prevent de facto unions, since there was no lack of religious scholars or scholarly believers. Modern scientists could be believers or non-believers. Their position in relation to the divinity was only a matter of motivation. Believers loved science because it revealed the glory of the divinity, and non-believers loved it because it explained the world without God. But neither of them used as arguments what now belonged only to their private convictions. In the human sciences, there were systematic explorations of the determinations of human existence. And since we are thinking beings, the determinations of our existence are also those of our thought. How do the technical, historical, economic, social and political conditions in which we live form, deform and set limits on our knowledge? What are the structures of our biology, our language, our symbolic systems, our communicative interactions, our psychology and our processes of subjectivation? 
Modern thought, with its scientific and critical ideal, constantly searches for the conditions and limits imposed on it, particularly those that are as yet unknown to it, that remain in the shadows of its consciousness. It seeks to discover what determines it “behind its back.” While the transcendental subject described by Kant in his Critique of Pure Reason fixed the image a great mind had of it in the late eighteenth century, modern philosophy explores a transcendental subject that is in the process of becoming, continually being re-examined and more precisely defined by the human sciences, a subject immersed in the vagaries of cultures and history, emerging from its unconscious determinations and the techno-symbolic mechanisms that drive it. I will now broadly outline the figure of the transcendental subject of the scientific era, a figure that re-examines and at the same time transforms the three complementary aspects of the agent intellect.

- The Aristotelian intellect becomes living intelligence. This involves the effective cognitive activities of subjects, what is experienced spontaneously in time by living, mortal human beings.

- The intelligence becomes scientific investigation. I use this term to designate all undertakings by which the living intelligence becomes scientifically intelligible, including the technical and symbolic tools, the methods and the disciplines used in those undertakings.

- The intelligible becomes the intelligible intelligence, which is the image of the living intelligence that is produced through scientific and critical investigation.

An evolving transcendental subject emerges from this reflexive cycle in which the living intelligence contemplates its own image in the form of a scientifically intelligible intelligence. Scientific investigation here is the internal mirror of the transcendental subjectivity, the mediation through which the living intelligence observes itself. It is obviously impossible to confuse the living intelligence and its scientifically intelligible image, any more than one can confuse the map and the territory, or the experience and its description. Nor can one confuse the mirror (scientific investigation) with the being reflected in it (the living intelligence), nor with the image that appears in the mirror (the intelligible intelligence). These three aspects together form a dynamic unit that would collapse if one of them were eliminated. While the living intelligence would continue to exist without a mirror or scientific image, it would be very much diminished. It would have lost its capacity to reflect from a universal perspective. The creative paradox of the intellectual reflexivity of the scientific age may be formulated as follows. It is clear, first of all, that the living intelligence is truly transformed by scientific investigation, since the living intelligence that knows its image through a certain scientific investigation is not the same (does not have the same experience) as the one that does not know it, or that knows another image, the result of another scientific investigation. But it is just as clear, by definition, that the living intelligence reflects itself in the intelligible image presented to it through scientific knowledge. In other words, the living intelligence is equally dependent on the scientific and critical investigation that produces the intelligible image in which it is reflected. 
When we observe our physical appearance in a mirror, the image in the mirror in no way changes our physical appearance, only the mental representation we have of it. However, the living intelligence cannot discover its intelligible image without including the reflexive process itself in its experience, and without at the same time being changed. In short, a critical science that explores the limits and determinations of the knowing subject does not only reflect knowledge—it increases it. Thus the modern transcendental subject is—by its very nature—evolutionary, participating in a dynamic of growth. In line with this evolutionary view of the scientific age, which contrasts with the fixity of the previous age, the collectivity that possesses reflexive knowledge is no longer a theosophical hierarchy oriented toward the agent intellect but a republic of letters oriented toward the augmentation of human knowledge, a scientific community that is expanding demographically and is organized into academies, learned societies and universities. While the agent intellect looked out over a cosmos emanating from eternity, in analog resonance with the human microcosm, the transcendental subject explores a universe infinitely open to scientific investigation, technical mastery and political liberation.

The technological age and its algorithmic intelligence

Reflexive knowledge has, in fact, always been informed by some technology, since it cannot be exercised without symbolic tools and thus the media that support those tools. But the next age of reflexive knowledge can properly be called technological because the technical augmentation of cognition is explicitly at the centre of its project. Technology now enters the loop of reflexive consciousness as the agent of the acceleration of its own augmentation. This last point was no doubt glimpsed by a few philosophers before the twentieth century, such as Condorcet in his posthumous book of 1795, Sketch for a Historical Picture of the Progress of the Human Mind. But the truly technological dimension of reflexive knowledge began to be fully thought through only in the twentieth century, with Pierre Teilhard de Chardin, Norbert Wiener and Marshall McLuhan, to whom we should also add the modest genius Douglas Engelbart. The regulating ideal of the reflexive knowledge of the theosophical age was the agent intellect, and that of the scientific-critical age was the transcendental subject. In continuity with the two preceding periods, the reflexive knowledge of the technological age will be organized around the ideal of algorithmic intelligence, which inherits from the agent intellect its universality or, in other words, its capacity to unify humanity’s reflexive knowledge. It also inherits its power to be reflected in finite intelligences. But, in contrast with the agent intellect, instead of descending from eternity, it emerges from the multitude of human actions immersed in space and time. Like the transcendental subject, algorithmic intelligence is rational, critical, scientific, purely human, evolutionary and always in a state of learning. But the vocation of the transcendental subject was to reflexively contain the human universe, and the human universe no longer has a recognizable face. 
The “death of man” announced by Foucault[27] should be understood in the sense of the loss of figurability of the transcendental subject. The labyrinth of philosophies, methodologies, theories and data from the human sciences has become inextricably complicated. The transcendental subject has not only been dissolved in symbolic structures or anonymous complex systems, it is also fragmented in the broken mirror of the disciplines of the human sciences. It is obvious that the technical medium of a new figure of reflexive knowledge will be the Internet, and more generally, computer science and ubiquitous communication. But how can symbol-manipulating automata be used on a large scale not only to reunify our reflexive knowledge but also to increase the clarity, precision and breadth of the teeming diversity enveloped by our knowledge? The missing link is not only technical, but also scientific. We need a science that grasps the new possibilities offered by technology in order to give collective intelligence the means to reflect itself, thus inaugurating a new form of subjectivity. As the groundwork of this new science—which I call computational semantics—IEML makes use of the self-reflexive capacity of language without excluding any of its functions, whether they be narrative, logical, pragmatic or other. Computational semantics produces a scientific image of collective intelligence: a calculated intelligence that will be able to be explored both as a simulated world and as a distributed augmented reality in physical space. Scientific change will generate a phenomenological change,[28] since ubiquitous multimedia interaction with a holographic image of collective intelligence will reorganize the human sensorium. The last, but not the least, change: social change. The community that possessed the previous figure of reflexive knowledge was a scientific community that was still distinct from society as a whole. 
But in the new figure of knowledge, reflexive collective intelligence emerges from any human group. Like the previous figures—theosophical and scientific—of reflexive knowledge, algorithmic intelligence is organized in three interdependent aspects.

- Reflexive collective intelligence represents the living intelligence, the intellect or soul of the great future digital civilization. It may be glimpsed by deciphering the signs of its approach in contemporary reality.

- Computational semantics holds up a technical and scientific mirror to collective intelligence, which is reflected in it. Its purpose is to augment and reflect the living intelligence of the coming civilization.

- Calculated intelligence, finally, is none other than the scientifically knowable image of the living intelligence of digital civilization. Computational semantics constructs, maintains and cultivates this image, which is that of an ecosystem of ideas emerging from human activity in the algorithmic medium, and which can be explored in sensory-motor mode.

In short, in the emergent unity of algorithmic intelligence, computational semantics calculates the cognitive simulation that augments and reflects the collective intelligence of the coming civilization.

[1] Professor at the University of Ottawa

[2] And twenty-three years after L’idéographie dynamique (Paris: La Découverte, 1991).

[3] And before the WWW itself, which would become a public phenomenon only in 1994 with the development of the first browsers such as Mosaic. At the time when the book was being written, the Web still existed only in the mind of Tim Berners-Lee.

[4] Approximately 40% in 2014 and probably more than half in 2025.

[5] I obviously do not claim to be the only “visionary” on the subject in the early 1990s. The pioneering work of Douglas Engelbart and Ted Nelson and the predictions of Howard Rheingold, Joël de Rosnay and many others should be cited.

[6] See The basics of IEML (online at: http://wp.me/P3bDiO-9V ).

[7] Beyond logic and statistics.

[8] IEML is the acronym for Information Economy MetaLanguage. See La grammaire d’IEML (online at: http://wp.me/P3bDiO-9V ).

[9] The Semantic Sphere 1: Computation, Cognition and Information Economy (London: ISTE, 2011; New York: Wiley, 2011).

[10] More than four hundred reference books.

[11] Umberto Eco, The Search for the Perfect Language (Oxford: Blackwell, 1995).

[12] “But more madness than genius would be required for such an enterprise”: Claude Lévi-Strauss, The Savage Mind (Chicago: University of Chicago Press, 1966), p. 130.

[13] Which is obviously true, but which only defines the problem rather than forbidding the solution.

[14] But true universalism is all-inclusive, and our daily lives are structured according to a multitude of universal standards, from space-time coordinates to HTTP on the Web. I responded at length in The Semantic Sphere to the prejudices of extremist post-modernism against scientific universality.

[15] Which is still used by a large community. But the only thing that Esperanto and IEML have in common is the fact that they are artificial languages. They have neither the same form nor the same purpose, nor the same use, which invalidates criticisms of IEML based on the criticism of Esperanto.

[16] See IEML Grammar (online at: http://wp.me/P3bDiO-9V ).

[17] But, fortunately, supported by the Canada Research Chairs program and by my wife, Darcia Labrosse.

[18] Michel Serres, Hermès V. Le passage du Nord-Ouest (Paris: Minuit, 1980).

[19] The concept of episteme, which is broader than the concept of paradigm, was developed in particular by Michel Foucault in The Order of Things (New York: Pantheon, 1970) and The Archaeology of Knowledge and the Discourse on Language (New York: Pantheon, 1972).

[20] At the beginning of Book A of his Metaphysics.

[21] This is the Axial Age identified by Karl Jaspers.

[22] Book Lambda, 9

[23] In particular in Book III.

[24] See, for example, Moses Maimonides, The Guide for the Perplexed, translated into English by Michael Friedländer (New York: Cosimo Classics, 2007) (original in Arabic from the twelfth century). – Averroes (Ibn Rushd), Long Commentary on the De Anima of Aristotle, translated with introduction and notes by Richard C. Taylor (New Haven: Yale University Press, 2009) (original in Arabic from the twelfth century). – Saint Thomas Aquinas, On the Unity of the Intellect Against the Averroists (original in Latin from the thirteenth century). – Herbert A. Davidson, Alfarabi, Avicenna, and Averroes, on Intellect: Their Cosmologies, Theories of the Active Intellect, and Theories of Human Intellect (New York, Oxford: Oxford University Press, 1992). – Henri Corbin, History of Islamic Philosophy, translated by Liadain and Philip Sherrard (London: Kegan Paul, 1993). – Henri Corbin, En Islam iranien: aspects spirituels et philosophiques, 2nd ed. (Paris: Gallimard, 1978), 4 vols. – Alain de Libera, Métaphysique et noétique: Albert le Grand (Paris: Vrin, 2005).

[25] In Meditations on First Philosophy, “First Meditation.”

[26] Discourse on the Method, “Part IV.”

[27] At the end of The Order of Things (New York: Pantheon Books, 1970).

[28] See, for example, Stéphane Vial, L’être et l’écran (Paris: PUF, 2013).